153 feeds monitored. Published April 17, 2026.

Executive Summary

The defining story of this week is a convergence that both practitioners and strategists should track closely. Multiple independent demonstrations of AI agents operating inside QGIS arrived at the same time that QGIS 4.0.1 achieved full cross-platform availability. As demonstrated this week in Germany, Spain, and by independent practitioners, LLMs connected via the Model Context Protocol can execute 28 analytical steps inside the world’s most-deployed open-source GIS from a single text prompt. That development begins to shift the skill profile required for geospatial analysis in ways that will take years to fully understand. The critical counterpoint, voiced bluntly in a widely shared Medium piece titled “AI Hasn’t Landed for the Working GIS Analyst,” is that current tools are still not reliable under production conditions. Leaders should watch this gap between demonstration and deployment carefully because it defines both the opportunity and the risk.

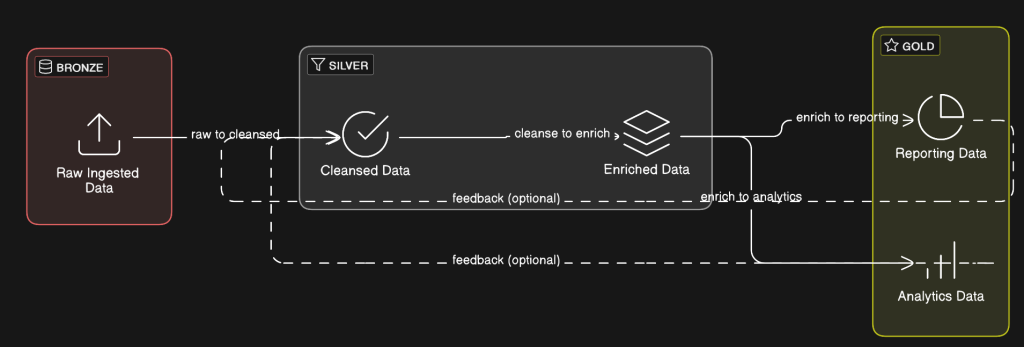

The AI story is also advancing from a different direction. Earth foundation model infrastructure is maturing into deployable data systems. A billion-scale SAR model, planetary-scale pixel embedding compression for real-time change detection, and on-orbit AI processing demonstrations from Planet Labs and Belgian startup EDGX all point toward the same underlying change: geospatial intelligence is moving toward an automated, machine-read pipeline and away from a purely human-supervised workflow. OGC’s completion of its Rainbow research initiative this week offers institutional acknowledgment of that reality. Its conclusion was clear: human-readable standards cannot scale to automated systems.

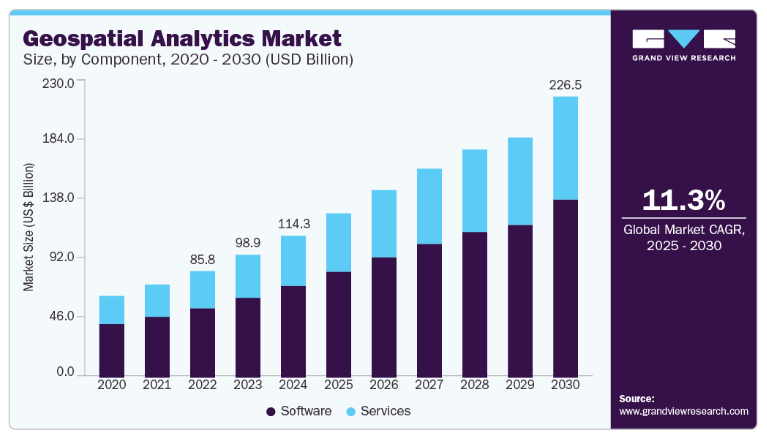

The week’s funding picture supports the same thesis. Capella received $48.9M for tactical space communications, Earth Blox raised £6M for EO-based climate risk, and Plume raised $3.9M for AI-driven renewable energy site intelligence. Together, those moves suggest that governments and institutional investors increasingly view geospatial data as critical infrastructure for both defense and the energy transition.

Major Market Developments

AI Agents Enter GIS Workflows at Visible Scale

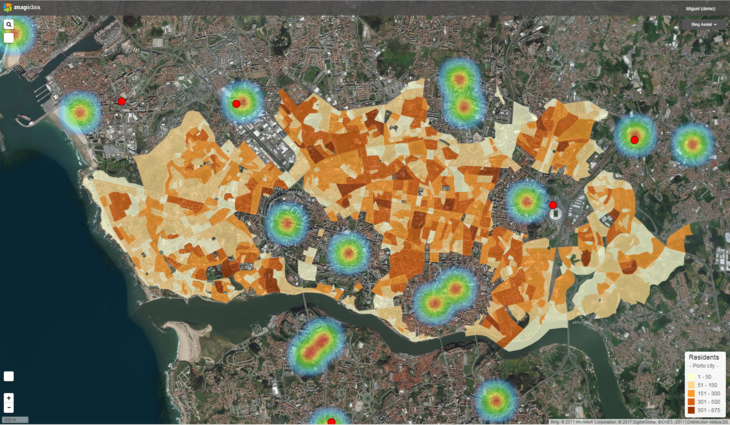

Multiple independent sources this week demonstrated AI agents autonomously executing complex GIS analysis inside QGIS. A Spanish GIS blog covered QGIS MCP, which integrates the Model Context Protocol with QGIS and allows Claude AI to drive analytical workflows through natural language commands. A German community blog highlighted a video demonstration in which a single prompt generated a complete map through 28 autonomous agent steps in 15 minutes. A third post covered LandTalk.AI, which brings Gemini and ChatGPT into QGIS map interpretation. These examples do not come from a single vendor or a coordinated campaign. Instead, they reflect an organic, distributed discovery moment across the QGIS user community. The strategic implication is substantial. If agentic GIS reaches production reliability, the barrier to performing complex geospatial analysis drops, the total market for geospatial intelligence expands, and demand for traditional GIS analyst roles may compress. At the same time, a blunt critical assessment published this week argues that AI tools still fail in practice when they encounter real-world geospatial data structures. The opportunity is real, but the timeline for reliable deployment remains unclear.

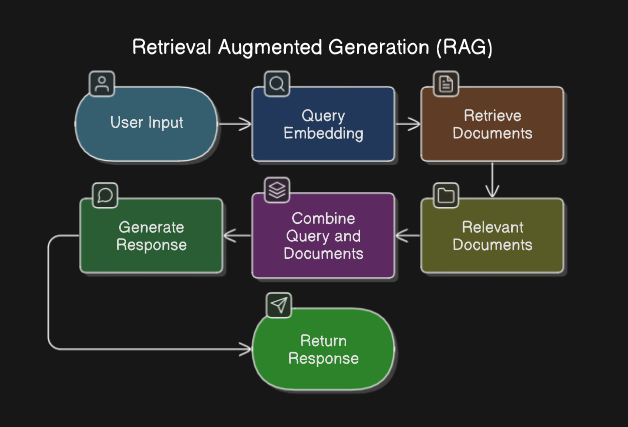

Earth Foundation Models Move From Research to Infrastructure

This week’s edition of The Spatial Edge covered a billion-parameter foundation model for SAR (synthetic aperture radar) image understanding. This is a breakthrough because SAR is the all-weather, day-night workhorse of serious Earth observation, yet it is notoriously difficult to train AI on because of speckle noise and geometric distortions. In parallel, GeoSpatial ML published the third installment of a series on compressing Earth embeddings using the Clay v1.5 foundation model, demonstrating per-pixel change detection served from static object storage at planetary scale. Alpha Earth embedding behavior across stable and changing land cover classes was also analyzed independently this week. Taken together, these posts show a sector that is no longer asking only whether the technology works. The question now is how to deploy it at scale. That marks a move from research into engineering. Organizations that depend on large-area monitoring for insurance, agriculture, or defense should be asking their vendors where they stand on foundation model integration.

On-Orbit AI Processing Reaches Commercial Demonstration

Planet Labs selected Alice Springs as the test site for a demonstration of on-board satellite AI, processing imagery at 500km altitude immediately after capture to identify aircraft without downlinking to ground first. Belgian startup EDGX launched its STERNA AI edge computing system into orbit on SpaceX’s Transporter-16 mission, designed for scalable deployment across satellite constellations. Spire also deployed a dedicated satellite for continuous Earth magnetic field mapping as part of the MagQuest challenge. These simultaneous moves, across different missions and vendors, point away from the traditional “collect then process” architecture and toward edge intelligence at the point of collection. The operational implications are profound: reduced latency for time-sensitive intelligence, lower ground station bandwidth requirements, and a new performance differentiation axis for EO vendors beyond resolution and revisit frequency.

Geospatial Standards Bodies Pivot to Machine-Readable Infrastructure

The OGC published the findings of its multi-year Rainbow research initiative this week and shifted the discussion into implementation. The core finding: standards written for human readers do not scale to a world where machines must interpret and act on geospatial data directly. The implementation phase introduces machine-readable Building Blocks and Profiles as modular, traceable components. That changes how geospatial interoperability specifications are written and consumed. In parallel, Phase 1 of the S-100 maritime data framework entered into force globally, allowing the maritime community to begin implementing next-generation chart specifications. Together, these developments suggest that the geospatial standards landscape is being redesigned around machine consumption. That matters for any organization procuring or building automated geospatial pipelines.

Notable Company Activity

Product Releases

- SimActive: Released Correlator3D Version 11 with native Gaussian splatting integration, enabling photogrammetry workflows to produce high-quality 3D splat models from imagery. This significantly expands deliverable formats for survey and construction clients.

- Mach9: Released Digital Surveyor 2, an AI feature extraction platform designed to address the bottleneck of converting LiDAR point clouds into engineering-usable features at scale. Geo Week News assessed it as addressing a genuine and growing pain point for survey and civil engineering teams.

- Foursquare: Published detailed use cases for FSQ Spatial Agent, positioning the product as eliminating the technical barrier between domain experts and complex geospatial analysis by pairing reasoning AI with the FSQ H3 Hub data platform, with no GIS expertise required.

- Giro3D (Oslandia): Released Giro3D 2.0, a major update to the open-source browser-based 3D geospatial visualization library, adding GPU-side processing for HD LiDAR and 3D Tiles with support for React and Vue.js integration.

- MapTiler: Released OpenMapTiles 3.16 with improved road connectivity and enhanced dark-mode styling.

Partnerships

- KSAT × Kongsberg NanoAvionics: Announced a strategic partnership to streamline smallsat mission deployment, reducing operational and financial burden for satellite operators by integrating ground station services with mission management from a single provider.

- Hexagon × Vale: Hexagon’s R-evolution unit has begun aerial 3D mapping flights under the Green Cubes Digital Reality initiative, creating digital twins to support environmental reclamation across Vale’s mining operations in Brazil. This is a notable application of digital twin technology to ESG obligations at industrial scale.

Funding & M&A

- Capella Space: Awarded $48.9M for advanced tactical space communications in low Earth orbit. It is a defense-oriented contract that reinforces Capella’s positioning at the intersection of SAR and government intelligence.

- Earth Blox: Raised £6M for its climate risk platform built on Earth observation data. This is institutional capital chasing the intersection of EO and climate financial risk.

- Plume: Raised $3.9M to build AI geospatial agents for renewable energy site intelligence. This niche is directly tied to the pace of energy transition investment.

Government and Policy Developments

The OGC’s Rainbow initiative represents the most consequential standards development of the week. The multi-year project, backed by EU Horizon Europe funding and partners including ESA, NRCan, UKHO, and NGA, concluded that geospatial standards must become machine-readable to support an automated world. The move to Building Blocks and Profiles as modular, machine-parseable components will take years to propagate through procurement and compliance requirements. Organizations building automated geospatial pipelines should track this transition closely because it will eventually reshape how contracts are specified and systems are certified.

The global S-100 maritime data standard Phase 1 entering into force is a parallel marker of the same structural shift. Shipping, port management, and maritime defense organizations should begin planning for the transition from traditional ENC chart formats to the S-100 product family.

In Europe, development of EuroCoreReferenceMap — a high-value large-scale geospatial dataset for EU policymakers — is underway, highlighting continued EU investment in sovereign spatial data infrastructure. In Canada, the OGC Canada Forum and the GeoIgnite conference are both building institutional momentum around digital sovereignty and national data connectivity. K2 Geospatial’s sponsorship of GeoIgnite under an explicit digital sovereignty banner reflects how Canadian vendors are positioning themselves around the theme.

The GeoAI and the Law newsletter provided a detailed analysis of California Governor Newsom’s Executive Order N-5-26, signed March 30, which places AI safety and accountability requirements into state procurement contracts rather than creating new legislation. This procurement-driven governance model effectively reaches every vendor selling AI-enabled products or services to California state government, including geospatial AI vendors, and may become a template for other states and federal agencies.

India’s Geospatial World published a substantive interview on India’s defence geospatial transformation, presenting space and geospatial technologies as central to strategic autonomy. The interview highlights a decade of accelerating integration of geospatial capability into military decision-making, indigenization policy, and international collaboration, underscoring India’s emergence as an increasingly important geospatial market for both data and platform vendors.

Technology and Research Trends

The technical direction of travel this week centers on three converging developments: agentic GIS, foundation model operationalization, and cloud-native format adoption in production environments.

On agentic GIS, the QGIS MCP ecosystem is developing rapidly without a single vendor driving it. That is a community-level adoption pattern, which historically has been more durable than vendor-led adoption in the geospatial sector. For technology leaders, the question is not whether AI-assisted GIS workflows will become standard. The real questions are how fast that happens and what reliability threshold organizations will require before they depend on these tools for consequential decisions.

On foundation models, the week’s most technically substantive post was GeoSpatial ML’s DeltaBit piece, which demonstrated pixel-level change detection at planetary scale by compressing Clay v1.5 Earth embeddings to a density suitable for browser-based serving. The engineering ambition of making dense per-pixel embeddings available to any user at global scale would fundamentally change how change detection products are built and delivered. The Spatial Edge’s coverage of the SAR billion-parameter model adds weight to the broader pattern that foundation models designed for EO data are becoming production-grade.

On cloud-native formats, a detailed case study from Swiss consultancy EBP described integrating InSAR ground deformation data into Switzerland’s national natural hazard platform using COG, PMTiles, Parquet, and DuckDB. This is the kind of production case study that turns format advocacy into demonstrated operational value. In a government infrastructure context, the combination of those four tools is also a useful measure of the cloud-native stack’s maturity.

Open Source Ecosystem Developments

QGIS 4.0.1 resolved a Mac distribution issue and is now fully available across platforms. The 4.0 release cycle is drawing intense practitioner attention as the community works through migration and workflow adaptation.

More important over the long term was a pair of posts from OPENGIS.ch, maintainers of QField and major QGIS contributors, articulating and publishing results from their #sustainQGIS initiative. In 2025, the firm invested 168 hours in QGIS maintenance work that included bug fixes, code reviews, refactoring, and test coverage. The funding mechanism is built into their commercial support contracts. Unused hours are donated, and a portion of every multi-day contract is reserved for initiative work. This “sustainability by contract” model addresses one of open source’s most persistent vulnerabilities: maintenance work that delivers no visible features but remains essential to long-term software health. Enterprise QGIS users who depend on the platform for mission-critical workflows should understand this model and consider whether their own procurement practices support or undermine it.

Giro3D 2.0 from Oslandia is a notable open-source release. It is a browser-based 3D visualization library with GPU-accelerated LiDAR and 3D Tiles support that is now integrated into production React and Vue.js applications. PostGIS issued simultaneous security patches across versions 3.2 through 3.6.

Watch List

- Gaussian Splatting as a Production Survey Deliverable: SimActive’s Correlator3D v11 integrates Gaussian splatting natively, and Geo Week News published a dedicated analysis. This novel 3D representation technique is entering commercial photogrammetry after emerging from research. It is a potential disruptor to traditional point cloud and mesh formats worth tracking in survey and AEC workflows.

- California AI Procurement Model: If California’s procurement-driven AI governance approach scales, it creates a de facto compliance requirement for geospatial AI vendors selling to government. Watch for adoption in other states and potential federal influence.

- Celeste Constellation: The first two of eleven planned Celeste testbed satellites launched this week to supplement Galileo. European sovereign positioning infrastructure is developing a second track beyond Galileo itself.

- Plume + Renewable Energy Site Intelligence: The $3.9M raise for AI geospatial agents targeting renewable energy siting taps into the energy transition capital cycle. A small seed round, but the product thesis — AI agents replacing manual geospatial analysis for site selection — is the same thesis as FSQ Spatial Agent applied to a high-growth vertical.

- Apple Maps and Geopolitical Cartography: Apple denied this week that it removed Lebanese towns and villages from Apple Maps in connection with the Israeli invasion — a story that generated significant social media attention. The incident reflects growing scrutiny of how commercial map providers handle politically sensitive geographic representation. This is a reputational and regulatory risk vector for any organization operating consumer-facing mapping products.

Top Posts of the Week

- QGIS MCP: conecta Claude AI con QGIS y automatiza tu flujo de trabajo — MappingGIS — The most shared practical demonstration this week of LLM-driven GIS automation; the clearest articulation of what agentic QGIS looks like in practice for a non-technical audience.

- A billion-scale model for understanding radar images — The Spatial Edge — Covers multiple converging EO AI research developments including the SAR foundation model, global 1m forest canopy heights from Meta/WRI, and cloud-free imaging advances; the week’s best single-source EO research summary.

- From Research to Implementation: Building Shared Infrastructure for an Automated World — Open Geospatial Consortium — OGC’s formal announcement of its shift from research to implementation of machine-readable geospatial standards. This is a consequential development with long-horizon procurement implications.

- GeoAI and the Law Newsletter — Spatial Law & Policy — Detailed analysis of California’s procurement-driven AI governance order; the most substantive policy analysis of the week for geospatial AI vendors serving government.

- QGIS Sustainability Initiative – Annual Report — OPENGIS.ch — A rare transparent accounting of how a commercial QGIS services firm funds open-source maintenance. It is directly relevant to any enterprise organization assessing the sustainability of its QGIS dependency.

Cercana Executive Briefing is generated from 153+ feeds aggregated by geofeeds.me.